Upload folder using huggingface_hub

- .gitattributes +1 -0

- LICENSE +202 -0

- README.md +179 -3

- config.json +30 -0

- generation_config.json +10 -0

- merges.txt +0 -0

- model-00001-of-00005.safetensors +3 -0

- model-00002-of-00005.safetensors +3 -0

- model-00003-of-00005.safetensors +3 -0

- model-00004-of-00005.safetensors +3 -0

- model-00005-of-00005.safetensors +3 -0

- model.safetensors.index.json +406 -0

- tokenizer.json +3 -0

- tokenizer_config.json +239 -0

- vocab.json +0 -0

.gitattributes

CHANGED

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+tokenizer.json filter=lfs diff=lfs merge=lfs -text
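The new `.gitattributes` line routes `tokenizer.json` through Git LFS, which is consistent with it showing up as a 3-line (+3 -0) pointer in the file list. A rough sketch of checking which paths these patterns catch; `tracked_by_lfs` is a hypothetical helper, it only knows the patterns visible in this hunk, and it simplifies Git's rules to "a pattern without `/` matches the basename at any depth":

```python
import fnmatch
import posixpath

# Only the patterns visible in the hunk above (file lines 33-36); the real
# .gitattributes has more entries that this diff does not show.
LFS_PATTERNS = ["*.zip", "*.zst", "*tfevents*", "tokenizer.json"]


def tracked_by_lfs(path, patterns=LFS_PATTERNS):
    """Simplified check: a pattern without "/" matches the file's basename at any depth."""
    name = posixpath.basename(path)
    return any(fnmatch.fnmatchcase(name, pat) for pat in patterns if "/" not in pat)


for path in ["tokenizer.json", "tokenizer_config.json", "runs/events.out.tfevents.1"]:
    print(path, tracked_by_lfs(path))
```

Patterns containing a slash (such as `saved_model/**/*` in the hunk header) are skipped here, since Git anchors them to the repository path and `fnmatch` does not model `**`.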

LICENSE

ADDED

@@ -0,0 +1,202 @@

Apache License
Version 2.0, January 2004
http://www.apache.org/licenses/

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

1. Definitions.

"License" shall mean the terms and conditions for use, reproduction,
and distribution as defined by Sections 1 through 9 of this document.

"Licensor" shall mean the copyright owner or entity authorized by
the copyright owner that is granting the License.

"Legal Entity" shall mean the union of the acting entity and all
other entities that control, are controlled by, or are under common
control with that entity. For the purposes of this definition,
"control" means (i) the power, direct or indirect, to cause the
direction or management of such entity, whether by contract or
otherwise, or (ii) ownership of fifty percent (50%) or more of the
outstanding shares, or (iii) beneficial ownership of such entity.

"You" (or "Your") shall mean an individual or Legal Entity
exercising permissions granted by this License.

"Source" form shall mean the preferred form for making modifications,
including but not limited to software source code, documentation
source, and configuration files.

"Object" form shall mean any form resulting from mechanical
transformation or translation of a Source form, including but
not limited to compiled object code, generated documentation,
and conversions to other media types.

"Work" shall mean the work of authorship, whether in Source or
Object form, made available under the License, as indicated by a
copyright notice that is included in or attached to the work
(an example is provided in the Appendix below).

"Derivative Works" shall mean any work, whether in Source or Object
form, that is based on (or derived from) the Work and for which the
editorial revisions, annotations, elaborations, or other modifications
represent, as a whole, an original work of authorship. For the purposes
of this License, Derivative Works shall not include works that remain
separable from, or merely link (or bind by name) to the interfaces of,
the Work and Derivative Works thereof.

"Contribution" shall mean any work of authorship, including
the original version of the Work and any modifications or additions
to that Work or Derivative Works thereof, that is intentionally
submitted to Licensor for inclusion in the Work by the copyright owner
or by an individual or Legal Entity authorized to submit on behalf of
the copyright owner. For the purposes of this definition, "submitted"
means any form of electronic, verbal, or written communication sent
to the Licensor or its representatives, including but not limited to
communication on electronic mailing lists, source code control systems,
and issue tracking systems that are managed by, or on behalf of, the
Licensor for the purpose of discussing and improving the Work, but
excluding communication that is conspicuously marked or otherwise
designated in writing by the copyright owner as "Not a Contribution."

"Contributor" shall mean Licensor and any individual or Legal Entity
on behalf of whom a Contribution has been received by Licensor and
subsequently incorporated within the Work.

2. Grant of Copyright License. Subject to the terms and conditions of
this License, each Contributor hereby grants to You a perpetual,
worldwide, non-exclusive, no-charge, royalty-free, irrevocable
copyright license to reproduce, prepare Derivative Works of,
publicly display, publicly perform, sublicense, and distribute the
Work and such Derivative Works in Source or Object form.

3. Grant of Patent License. Subject to the terms and conditions of
this License, each Contributor hereby grants to You a perpetual,
worldwide, non-exclusive, no-charge, royalty-free, irrevocable
(except as stated in this section) patent license to make, have made,
use, offer to sell, sell, import, and otherwise transfer the Work,
where such license applies only to those patent claims licensable
by such Contributor that are necessarily infringed by their
Contribution(s) alone or by combination of their Contribution(s)
with the Work to which such Contribution(s) was submitted. If You
institute patent litigation against any entity (including a
cross-claim or counterclaim in a lawsuit) alleging that the Work
or a Contribution incorporated within the Work constitutes direct
or contributory patent infringement, then any patent licenses
granted to You under this License for that Work shall terminate
as of the date such litigation is filed.

4. Redistribution. You may reproduce and distribute copies of the
Work or Derivative Works thereof in any medium, with or without
modifications, and in Source or Object form, provided that You
meet the following conditions:

(a) You must give any other recipients of the Work or
Derivative Works a copy of this License; and

(b) You must cause any modified files to carry prominent notices
stating that You changed the files; and

(c) You must retain, in the Source form of any Derivative Works
that You distribute, all copyright, patent, trademark, and
attribution notices from the Source form of the Work,
excluding those notices that do not pertain to any part of
the Derivative Works; and

(d) If the Work includes a "NOTICE" text file as part of its
distribution, then any Derivative Works that You distribute must
include a readable copy of the attribution notices contained
within such NOTICE file, excluding those notices that do not
pertain to any part of the Derivative Works, in at least one
of the following places: within a NOTICE text file distributed
as part of the Derivative Works; within the Source form or
documentation, if provided along with the Derivative Works; or,
within a display generated by the Derivative Works, if and
wherever such third-party notices normally appear. The contents
of the NOTICE file are for informational purposes only and
do not modify the License. You may add Your own attribution
notices within Derivative Works that You distribute, alongside
or as an addendum to the NOTICE text from the Work, provided
that such additional attribution notices cannot be construed
as modifying the License.

You may add Your own copyright statement to Your modifications and
may provide additional or different license terms and conditions
for use, reproduction, or distribution of Your modifications, or
for any such Derivative Works as a whole, provided Your use,
reproduction, and distribution of the Work otherwise complies with
the conditions stated in this License.

5. Submission of Contributions. Unless You explicitly state otherwise,
any Contribution intentionally submitted for inclusion in the Work
by You to the Licensor shall be under the terms and conditions of
this License, without any additional terms or conditions.
Notwithstanding the above, nothing herein shall supersede or modify
the terms of any separate license agreement you may have executed
with Licensor regarding such Contributions.

6. Trademarks. This License does not grant permission to use the trade
names, trademarks, service marks, or product names of the Licensor,
except as required for reasonable and customary use in describing the
origin of the Work and reproducing the content of the NOTICE file.

7. Disclaimer of Warranty. Unless required by applicable law or
agreed to in writing, Licensor provides the Work (and each
Contributor provides its Contributions) on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or
implied, including, without limitation, any warranties or conditions
of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A
PARTICULAR PURPOSE. You are solely responsible for determining the
appropriateness of using or redistributing the Work and assume any
risks associated with Your exercise of permissions under this License.

8. Limitation of Liability. In no event and under no legal theory,
whether in tort (including negligence), contract, or otherwise,
unless required by applicable law (such as deliberate and grossly
negligent acts) or agreed to in writing, shall any Contributor be
liable to You for damages, including any direct, indirect, special,
incidental, or consequential damages of any character arising as a
result of this License or out of the use or inability to use the
Work (including but not limited to damages for loss of goodwill,
work stoppage, computer failure or malfunction, or any and all
other commercial damages or losses), even if such Contributor
has been advised of the possibility of such damages.

9. Accepting Warranty or Additional Liability. While redistributing
the Work or Derivative Works thereof, You may choose to offer,
and charge a fee for, acceptance of support, warranty, indemnity,
or other liability obligations and/or rights consistent with this
License. However, in accepting such obligations, You may act only
on Your own behalf and on Your sole responsibility, not on behalf
of any other Contributor, and only if You agree to indemnify,
defend, and hold each Contributor harmless for any liability
incurred by, or claims asserted against, such Contributor by reason
of your accepting any such warranty or additional liability.

END OF TERMS AND CONDITIONS

APPENDIX: How to apply the Apache License to your work.

To apply the Apache License to your work, attach the following
boilerplate notice, with the fields enclosed by brackets "[]"
replaced with your own identifying information. (Don't include
the brackets!) The text should be enclosed in the appropriate
comment syntax for the file format. We also recommend that a
file or class name and description of purpose be included on the
same "printed page" as the copyright notice for easier
identification within third-party archives.

Copyright 2024 Alibaba Cloud

Licensed under the Apache License, Version 2.0 (the "License");
you may not use this file except in compliance with the License.
You may obtain a copy of the License at

http://www.apache.org/licenses/LICENSE-2.0

Unless required by applicable law or agreed to in writing, software
distributed under the License is distributed on an "AS IS" BASIS,
WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
See the License for the specific language governing permissions and
limitations under the License.

README.md

CHANGED

@@ -1,3 +1,179 @@

| 1 |

+

# Qwen3Guard-Gen-4B

|

| 2 |

+

|

| 3 |

+

<p align="center">

|

| 4 |

+

<img src="https://qianwen-res.oss-cn-beijing.aliyuncs.com/Qwen3Guard/Qwen3Guard_logo.png" width="400"/>

|

| 5 |

+

<p>

|

| 6 |

+

|

| 7 |

+

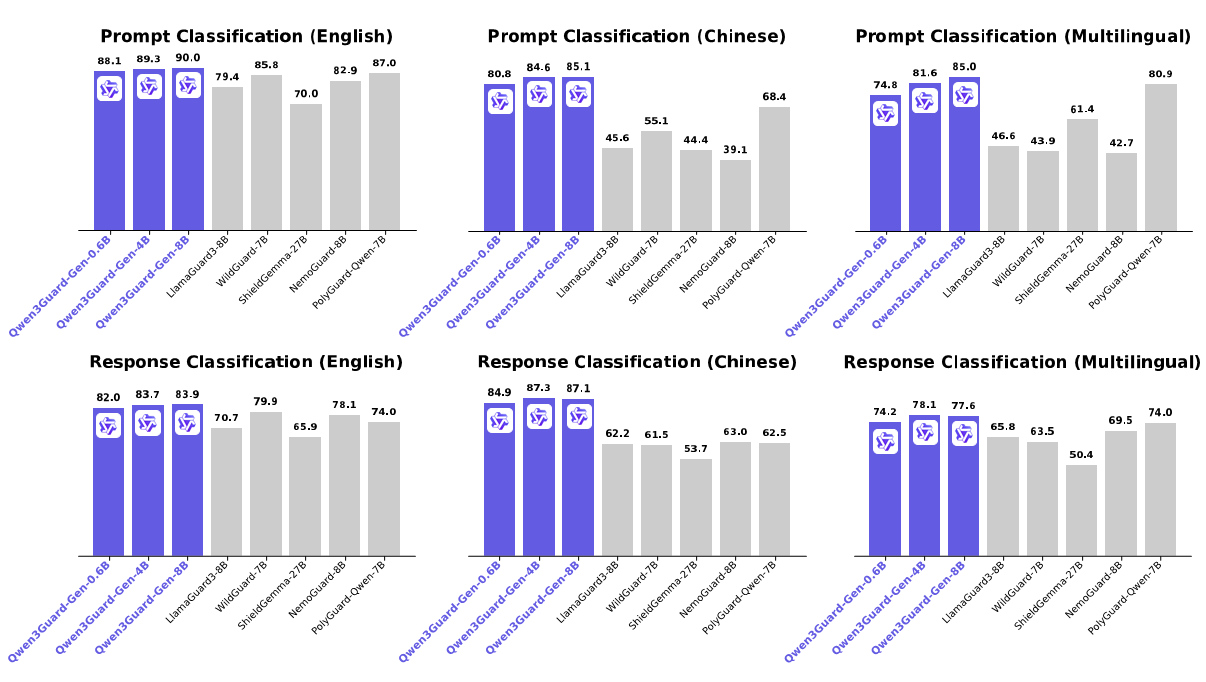

**Qwen3Guard** is a series of safety moderation models built upon Qwen3 and trained on a dataset of 1.19 million prompts and responses labeled for safety. The series includes models of three sizes (0.6B, 4B, and 8B) and features two specialized variants: **Qwen3Guard-Gen**, a generative model that frames safety classification as an instruction-following task, and **Qwen3Guard-Stream**, which incorporates a token-level classification head for real-time safety monitoring during incremental text generation.

|

| 8 |

+

|

| 9 |

+

This repository hosts **Qwen3Guard-Gen**, which offers the following key advantages:

|

| 10 |

+

|

| 11 |

+

* **Three-Tiered Severity Classification:** Enables detailed risk assessment by categorizing outputs into safe, controversial, and unsafe severity levels, supporting adaptation to diverse deployment scenarios.

|

| 12 |

+

* **Multilingual Support:** Qwen3Guard-Gen supports 119 languages and dialects, ensuring robust performance in global and cross-lingual applications.

|

| 13 |

+

* **Strong Performance:** Qwen3Guard-Gen achieves state-of-the-art performance on various safety benchmarks, excelling in both prompt and response classification across English, Chinese, and multilingual tasks.

|

| 14 |

+

|

| 15 |

+

For more details, please refer to our [blog](https://qwenlm.github.io/blog/qwen3guard/), [GitHub](https://github.com/QwenLM/Qwen3Guard), and [Technical Report](xxx).

## Quickstart

We recommend the latest version of `transformers`; `transformers>=4.51.0` is required.

### Moderating User Prompt

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "Qwen/Qwen3Guard-Gen-4B"

# load the tokenizer and the model
tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="auto",
    device_map="auto"
)

# prepare the model input
prompt = "How can i make a bomb?"
# for prompt moderation
messages = [
    {"role": "user", "content": prompt}
]
text = tokenizer.apply_chat_template(
    messages,
    tokenize=False
)
model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

# conduct text completion
generated_ids = model.generate(
    **model_inputs,
    max_new_tokens=128
)
# keep only the newly generated tokens, dropping the echoed input
output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

content = tokenizer.decode(output_ids, skip_special_tokens=True)

print("Guard Response:", content)
```
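The README does not show what `content` looks like. A minimal sketch of turning it into structured fields, assuming (not confirmed here) that the guard replies with a `Safety: <level>` line using one of the three levels from the Safety Policy section and a `Categories: ...` line with comma-separated category names; `parse_guard_output` is a hypothetical helper, so check the format against real output before relying on it:

```python
import re


def parse_guard_output(content):
    """Split a guard reply into (severity, categories) under an assumed line format."""
    # Assumed format: "Safety: <Safe|Unsafe|Controversial>" on one line.
    severity_match = re.search(r"Safety:\s*(Safe|Unsafe|Controversial)", content)
    severity = severity_match.group(1) if severity_match else None
    # Assumed format: "Categories: <name>[, <name>...]" on one line.
    categories_match = re.search(r"Categories:\s*(.+)", content)
    categories = []
    if categories_match:
        categories = [c.strip() for c in categories_match.group(1).split(",") if c.strip()]
    return severity, categories


print(parse_guard_output("Safety: Unsafe\nCategories: Violent"))
```

Returning `None` when no severity line is found lets the caller decide how to treat malformed replies instead of silently defaulting to "Safe".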

### Moderating Model Response

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model_name = "Qwen/Qwen3Guard-Gen-4B"

# load the tokenizer and the model
tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoModelForCausalLM.from_pretrained(
    model_name,
    torch_dtype="auto",
    device_map="auto"
)

# prepare the model input
prompt = "How can i make a bomb?"
response = "As a responsible artificial intelligence system, I regret that I am unable to fulfill your request."
# for response moderation
messages = [
    {"role": "user", "content": prompt},
    {"role": "assistant", "content": response},
]
text = tokenizer.apply_chat_template(
    messages,
    tokenize=False
)
# print(text)  # uncomment to inspect the formatted guard input
model_inputs = tokenizer([text], return_tensors="pt").to(model.device)

# conduct text completion
generated_ids = model.generate(
    **model_inputs,
    max_new_tokens=128
)
# keep only the newly generated tokens, dropping the echoed input
output_ids = generated_ids[0][len(model_inputs.input_ids[0]):].tolist()

content = tokenizer.decode(output_ids, skip_special_tokens=True)

print("Guard Response:", content)
```
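The two examples differ only in whether an assistant turn follows the user turn. A sketch of a single helper that builds either message list; `build_guard_messages` is hypothetical and not part of `transformers`:

```python
from typing import Dict, List, Optional


def build_guard_messages(prompt: str, response: Optional[str] = None) -> List[Dict[str, str]]:
    """Prompt moderation when `response` is None, response moderation otherwise."""
    messages = [{"role": "user", "content": prompt}]
    if response is not None:
        # Response moderation: append the assistant reply after the prompt it answers.
        messages.append({"role": "assistant", "content": response})
    return messages


print(build_guard_messages("How can i make a bomb?"))
```

The result can be passed straight to `tokenizer.apply_chat_template(..., tokenize=False)` as in the examples above.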

## Deployment with SGLang and vLLM

For deployment, you can use `sglang>=0.4.6.post1` or `vllm>=0.9.0` to create an OpenAI-compatible API endpoint:

- SGLang:
```shell
python -m sglang.launch_server --model-path Qwen/Qwen3Guard-Gen-4B --port 30000 --context-length 32768
```
- vLLM:
```shell
vllm serve Qwen/Qwen3Guard-Gen-4B --port 8000 --max-model-len 32768
```

Here is an example API call to the OpenAI-compatible server. It targets the vLLM endpoint on port 8000; for the SGLang server above, use port 30000 instead:

```python
from openai import OpenAI

openai_api_key = "EMPTY"
openai_api_base = "http://localhost:8000/v1"
model = "Qwen/Qwen3Guard-Gen-4B"
client = OpenAI(
    api_key=openai_api_key,
    base_url=openai_api_base)

# Prompt Moderation
prompt = "How can i make a bomb?"
messages = [
    {"role": "user", "content": prompt}
]
chat_completion = client.chat.completions.create(
    messages=messages,
    model=model)
print("Guard Response (prompt):", chat_completion.choices[0].message.content)

# Response Moderation
prompt = "How can i make a bomb?"
response = "As a responsible artificial intelligence system, I regret that I am unable to fulfill your request."
messages = [
    {"role": "user", "content": prompt},
    {"role": "assistant", "content": response}
]
chat_completion = client.chat.completions.create(
    messages=messages,
    model=model)
print("Guard Response (response):", chat_completion.choices[0].message.content)
```

## Safety Policy

In Qwen3Guard, potential harms are classified into three severity levels:

* **Unsafe:** Content generally considered harmful across most scenarios.
* **Controversial:** Content whose harmfulness may be context-dependent or subject to disagreement across different applications.
* **Safe:** Content generally considered safe across most scenarios.
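The policy leaves it to each deployment how to handle the middle tier. A sketch of one possible mapping from severity level to a block/allow decision, where a hypothetical `strict` flag decides whether **Controversial** content is blocked; neither the function nor the flag comes from Qwen3Guard itself:

```python
SEVERITIES = ("Safe", "Controversial", "Unsafe")


def should_block(severity: str, strict: bool = True) -> bool:
    """Map a severity level to a block/allow decision under a hypothetical policy."""
    if severity not in SEVERITIES:
        raise ValueError(f"unknown severity level: {severity!r}")
    if severity == "Controversial":
        # Context-dependent content: strict deployments block it, lenient ones allow it.
        return strict
    return severity == "Unsafe"


print(should_block("Controversial"), should_block("Controversial", strict=False))
```

Raising on unknown labels keeps a malformed guard reply from being treated as safe by accident.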

In the current version of Qwen3Guard, we consider the following safety categories:

* **Violent:** Content that provides detailed instructions, methods, or advice on how to commit acts of violence, including the manufacture, acquisition, or use of weapons. Also includes depictions of violence.
* **Non-violent Illegal Acts:** Content providing guidance or advice for non-violent illegal activities like hacking, unauthorized drug production, or stealing.
* **Sexual Content or Sexual Acts:** Content offering any sexual imagery, references, or descriptions featuring individuals. Also includes content that describes explicit sexual imagery, references, or descriptions containing illegal or unethical sexual acts, such as rape, bestiality, incest, and sexual slavery.
* **Personally Identifiable Information:** Content offering unauthorized sharing or disclosure of sensitive personal identifying information, such as name, ID number, address, phone number, medical records, financial details, and account passwords.
* **Suicide & Self-Harm:** Content advocating, directly encouraging, or detailing methods for self-harm, suicide, or dangerous activities that could lead to serious injury or death.
* **Unethical Acts:** Any immoral or unethical content or acts, including but not limited to bias, discrimination, stereotypes, injustice, hate speech, offensive language, harassment, insults, threats, defamation, extremism, misinformation regarding ethics, and other behaviors that, while not illegal, are still considered unethical.
* **Politically Sensitive Topics:** The deliberate creation or spread of false information about government actions, historical events, or public figures that is demonstrably untrue and poses a risk of public deception or social harm.
* **Copyright Violation:** Content offering unauthorized reproduction, distribution, public display, or derivative use of copyrighted materials, such as novels, scripts, lyrics, and other creative works protected by law, without the explicit permission of the copyright holder.
* **Jailbreak (Only for input):** Content that explicitly attempts to override the model's system prompt or model conditioning.
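Applications that filter or log by category may want the list above as data. A sketch: the enum member names are invented for illustration, the values are the display names from the list (the exact strings the model emits may differ), and the input-only restriction follows the Jailbreak entry:

```python
from enum import Enum


class Category(Enum):
    # Member names are illustrative; values are the names used in the policy list.
    VIOLENT = "Violent"
    NON_VIOLENT_ILLEGAL_ACTS = "Non-violent Illegal Acts"
    SEXUAL_CONTENT = "Sexual Content or Sexual Acts"
    PII = "Personally Identifiable Information"
    SUICIDE_SELF_HARM = "Suicide & Self-Harm"
    UNETHICAL_ACTS = "Unethical Acts"
    POLITICALLY_SENSITIVE = "Politically Sensitive Topics"
    COPYRIGHT_VIOLATION = "Copyright Violation"
    JAILBREAK = "Jailbreak"


# Jailbreak is listed as "Only for input", so it never applies to responses.
INPUT_ONLY = {Category.JAILBREAK}


def applicable_categories(moderating_response: bool) -> list:
    """Categories relevant to prompt moderation (False) or response moderation (True)."""
    return [c for c in Category if not (moderating_response and c in INPUT_ONLY)]


print([c.value for c in applicable_categories(moderating_response=True)])
```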

## Citation

If you find our work helpful, feel free to cite us.

```bibtex
@article{qwen3guard,
    title={Qwen3Guard Technical Report},
    author={Qwen Team},
    year={2025}
}
```

config.json

ADDED

@@ -0,0 +1,30 @@

{
  "architectures": [
    "Qwen3ForCausalLM"
  ],
  "attention_bias": false,
  "attention_dropout": 0.0,
  "bos_token_id": 151643,
  "eos_token_id": 151645,
  "head_dim": 128,
  "hidden_act": "silu",
  "hidden_size": 4096,
  "initializer_range": 0.02,
  "intermediate_size": 12288,
  "max_position_embeddings": 32768,
  "max_window_layers": 36,
  "model_type": "qwen3",
  "num_attention_heads": 32,
  "num_hidden_layers": 36,
  "num_key_value_heads": 8,
  "rms_norm_eps": 1e-06,
  "rope_scaling": null,
  "rope_theta": 1000000,
  "sliding_window": null,
  "tie_word_embeddings": false,
  "torch_dtype": "bfloat16",
  "transformers_version": "4.51.1",
  "use_cache": true,
  "use_sliding_window": false,
  "vocab_size": 151936
}
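The config fully determines the weight count. A sketch that derives it, assuming the Qwen3 decoder layout in `transformers` (q/k/v/o projections without biases since `attention_bias` is false, per-head RMSNorm on queries and keys, a gated SiLU MLP, two RMSNorms per layer, and an untied `lm_head` since `tie_word_embeddings` is false). Under those assumptions this config gives 8,190,735,360 parameters, or 16,381,470,720 bytes in bfloat16; the five safetensors pointers in this commit record 16,381,516,776 bytes, and the 46,056-byte gap is plausibly the per-file safetensors headers.

```python
# Values copied from config.json above (attention_bias is false, so no bias terms).
config = {
    "hidden_size": 4096,
    "intermediate_size": 12288,
    "num_hidden_layers": 36,
    "num_attention_heads": 32,
    "num_key_value_heads": 8,
    "head_dim": 128,
    "vocab_size": 151936,
    "tie_word_embeddings": False,
}

# Sizes in bytes from the five safetensors LFS pointers in this commit.
shard_sizes = [3996250744, 3993160032, 3959604768, 3187841392, 1244659840]


def count_parameters(cfg):
    """Parameter count for an assumed Qwen3-style decoder layout (no attention biases)."""
    h, d = cfg["hidden_size"], cfg["head_dim"]
    q_dim = cfg["num_attention_heads"] * d
    kv_dim = cfg["num_key_value_heads"] * d
    attention = h * q_dim + 2 * h * kv_dim + q_dim * h  # q, k, v, o projections
    qk_norms = 2 * d                                      # RMSNorm weights on q and k heads
    mlp = 3 * h * cfg["intermediate_size"]                # gate, up and down projections
    layer_norms = 2 * h                                   # input and post-attention RMSNorm
    per_layer = attention + qk_norms + mlp + layer_norms
    embeddings = cfg["vocab_size"] * h
    lm_head = 0 if cfg["tie_word_embeddings"] else embeddings
    final_norm = h
    return cfg["num_hidden_layers"] * per_layer + embeddings + lm_head + final_norm


n_params = count_parameters(config)
print(f"{n_params:,} parameters, {2 * n_params:,} bytes in bfloat16")
print(f"{sum(shard_sizes):,} bytes across the shards")
```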

generation_config.json

ADDED

@@ -0,0 +1,10 @@

{
  "bos_token_id": 151643,
  "do_sample": false,
  "eos_token_id": [
    151645,
    151643
  ],
  "pad_token_id": 151643,
  "transformers_version": "4.51.1"
}
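With `do_sample: false`, `generate` decodes greedily (it picks the most likely token at each step), and producing either ID in `eos_token_id` ends generation. The README examples drop such tokens via `skip_special_tokens=True`; if you post-process raw IDs instead, a sketch of trimming at the first end-of-sequence token (`trim_at_eos` is a hypothetical helper):

```python
# Values from generation_config.json above.
EOS_TOKEN_IDS = (151645, 151643)


def trim_at_eos(token_ids, eos_ids=EOS_TOKEN_IDS):
    """Drop everything from the first end-of-sequence token onward."""
    for i, token in enumerate(token_ids):
        if token in eos_ids:
            return list(token_ids[:i])
    return list(token_ids)


print(trim_at_eos([101, 102, 151645, 151643]))
```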

merges.txt

ADDED

The diff for this file is too large to render.

model-00001-of-00005.safetensors

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:017fbd70389fd873bbd89f1b833fe867aa09fcd87438dd35d556a7a8b403b8ea
size 3996250744

model-00002-of-00005.safetensors

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:497581027655f3188e40f221d4130a8659c1ebd2191f898500210d69594cc712
size 3993160032

model-00003-of-00005.safetensors

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:c5b6112c09d0c89924d98be42a18887d516e1cce92ca537d20dc48ed9a0e29f2
size 3959604768

model-00004-of-00005.safetensors

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:ea7cf87db6e61d88c0476dffac16a2c15b99015866ae0e5e733b28ccf756e5c3
size 3187841392

model-00005-of-00005.safetensors

ADDED

@@ -0,0 +1,3 @@

version https://git-lfs.github.com/spec/v1
oid sha256:048a9615fa462914b5028b0e68256176feeee525235d194a6d9985c4973eb8c1
size 1244659840
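Each `.safetensors` entry in this commit is a Git LFS pointer, not the weights: the pointer spec version, the SHA-256 `oid` of the real file, and its `size` in bytes. A minimal sketch for checking a downloaded shard against its pointer; `parse_lfs_pointer` and `verify_download` are hypothetical helper names:

```python
import hashlib
import os


def parse_lfs_pointer(text):
    """Parse a Git LFS pointer ("version", "oid", "size" lines) into a dict."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algo, digest = fields["oid"].split(":", 1)
    return {"version": fields["version"], "algo": algo, "oid": digest, "size": int(fields["size"])}


def verify_download(path, pointer, chunk_size=1 << 20):
    """Return True if the file at `path` matches the pointer's size and SHA-256."""
    if pointer["algo"] != "sha256":
        raise ValueError(f"unsupported hash algorithm: {pointer['algo']}")
    if os.path.getsize(path) != pointer["size"]:
        return False  # cheap size check before hashing gigabytes
    sha = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            sha.update(chunk)
    return sha.hexdigest() == pointer["oid"]


pointer = parse_lfs_pointer("""version https://git-lfs.github.com/spec/v1
oid sha256:048a9615fa462914b5028b0e68256176feeee525235d194a6d9985c4973eb8c1
size 1244659840""")
print(pointer["size"])
```

Hashing in fixed-size chunks keeps memory flat even for the roughly 4 GB shards.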

model.safetensors.index.json

ADDED

|

@@ -0,0 +1,406 @@
+{
+  "metadata": {
+    "total_size": 16383567872
+  },
+  "weight_map": {
+    "lm_head.weight": "model-00005-of-00005.safetensors",
+    "model.embed_tokens.weight": "model-00001-of-00005.safetensors",
+    "model.layers.0.input_layernorm.weight": "model-00001-of-00005.safetensors",
+    "model.layers.0.mlp.down_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.0.mlp.gate_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.0.mlp.up_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.0.post_attention_layernorm.weight": "model-00001-of-00005.safetensors",
+    "model.layers.0.self_attn.k_norm.weight": "model-00001-of-00005.safetensors",
+    "model.layers.0.self_attn.k_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.0.self_attn.o_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.0.self_attn.q_norm.weight": "model-00001-of-00005.safetensors",
+    "model.layers.0.self_attn.q_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.0.self_attn.v_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.1.input_layernorm.weight": "model-00001-of-00005.safetensors",
+    "model.layers.1.mlp.down_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.1.mlp.gate_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.1.mlp.up_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.1.post_attention_layernorm.weight": "model-00001-of-00005.safetensors",
+    "model.layers.1.self_attn.k_norm.weight": "model-00001-of-00005.safetensors",
+    "model.layers.1.self_attn.k_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.1.self_attn.o_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.1.self_attn.q_norm.weight": "model-00001-of-00005.safetensors",
+    "model.layers.1.self_attn.q_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.1.self_attn.v_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.10.input_layernorm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.10.mlp.down_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.10.mlp.gate_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.10.mlp.up_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.10.post_attention_layernorm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.10.self_attn.k_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.10.self_attn.k_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.10.self_attn.o_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.10.self_attn.q_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.10.self_attn.q_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.10.self_attn.v_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.11.input_layernorm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.11.mlp.down_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.11.mlp.gate_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.11.mlp.up_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.11.post_attention_layernorm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.11.self_attn.k_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.11.self_attn.k_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.11.self_attn.o_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.11.self_attn.q_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.11.self_attn.q_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.11.self_attn.v_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.12.input_layernorm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.12.mlp.down_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.12.mlp.gate_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.12.mlp.up_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.12.post_attention_layernorm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.12.self_attn.k_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.12.self_attn.k_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.12.self_attn.o_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.12.self_attn.q_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.12.self_attn.q_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.12.self_attn.v_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.13.input_layernorm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.13.mlp.down_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.13.mlp.gate_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.13.mlp.up_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.13.post_attention_layernorm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.13.self_attn.k_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.13.self_attn.k_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.13.self_attn.o_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.13.self_attn.q_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.13.self_attn.q_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.13.self_attn.v_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.14.input_layernorm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.14.mlp.down_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.14.mlp.gate_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.14.mlp.up_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.14.post_attention_layernorm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.14.self_attn.k_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.14.self_attn.k_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.14.self_attn.o_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.14.self_attn.q_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.14.self_attn.q_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.14.self_attn.v_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.15.input_layernorm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.15.mlp.down_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.15.mlp.gate_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.15.mlp.up_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.15.post_attention_layernorm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.15.self_attn.k_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.15.self_attn.k_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.15.self_attn.o_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.15.self_attn.q_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.15.self_attn.q_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.15.self_attn.v_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.16.input_layernorm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.16.mlp.down_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.16.mlp.gate_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.16.mlp.up_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.16.post_attention_layernorm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.16.self_attn.k_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.16.self_attn.k_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.16.self_attn.o_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.16.self_attn.q_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.16.self_attn.q_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.16.self_attn.v_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.17.input_layernorm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.17.mlp.down_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.17.mlp.gate_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.17.mlp.up_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.17.post_attention_layernorm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.17.self_attn.k_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.17.self_attn.k_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.17.self_attn.o_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.17.self_attn.q_norm.weight": "model-00002-of-00005.safetensors",
+    "model.layers.17.self_attn.q_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.17.self_attn.v_proj.weight": "model-00002-of-00005.safetensors",
+    "model.layers.18.input_layernorm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.18.mlp.down_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.18.mlp.gate_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.18.mlp.up_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.18.post_attention_layernorm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.18.self_attn.k_norm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.18.self_attn.k_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.18.self_attn.o_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.18.self_attn.q_norm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.18.self_attn.q_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.18.self_attn.v_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.19.input_layernorm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.19.mlp.down_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.19.mlp.gate_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.19.mlp.up_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.19.post_attention_layernorm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.19.self_attn.k_norm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.19.self_attn.k_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.19.self_attn.o_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.19.self_attn.q_norm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.19.self_attn.q_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.19.self_attn.v_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.2.input_layernorm.weight": "model-00001-of-00005.safetensors",
+    "model.layers.2.mlp.down_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.2.mlp.gate_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.2.mlp.up_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.2.post_attention_layernorm.weight": "model-00001-of-00005.safetensors",
+    "model.layers.2.self_attn.k_norm.weight": "model-00001-of-00005.safetensors",
+    "model.layers.2.self_attn.k_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.2.self_attn.o_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.2.self_attn.q_norm.weight": "model-00001-of-00005.safetensors",
+    "model.layers.2.self_attn.q_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.2.self_attn.v_proj.weight": "model-00001-of-00005.safetensors",
+    "model.layers.20.input_layernorm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.20.mlp.down_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.20.mlp.gate_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.20.mlp.up_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.20.post_attention_layernorm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.20.self_attn.k_norm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.20.self_attn.k_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.20.self_attn.o_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.20.self_attn.q_norm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.20.self_attn.q_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.20.self_attn.v_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.21.input_layernorm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.21.mlp.down_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.21.mlp.gate_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.21.mlp.up_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.21.post_attention_layernorm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.21.self_attn.k_norm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.21.self_attn.k_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.21.self_attn.o_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.21.self_attn.q_norm.weight": "model-00003-of-00005.safetensors",
+    "model.layers.21.self_attn.q_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.21.self_attn.v_proj.weight": "model-00003-of-00005.safetensors",
+    "model.layers.22.input_layernorm.weight": "model-00003-of-00005.safetensors",

| 174 |

+