Upload 9 files

- .gitattributes +4 -0

- Precision-confidence.png +3 -0

- Precision-recall.png +3 -0

- README.md +49 -0

- Recall-confidence.png +0 -0

- config.json +47 -0

- confusion-matrix.png +3 -0

- model.onnx +3 -0

- model.pt +3 -0

- result.png +3 -0

.gitattributes

CHANGED

@@ -33,3 +33,7 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
+confusion-matrix.png filter=lfs diff=lfs merge=lfs -text
+Precision-confidence.png filter=lfs diff=lfs merge=lfs -text
+Precision-recall.png filter=lfs diff=lfs merge=lfs -text
+result.png filter=lfs diff=lfs merge=lfs -text
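Review note: the hunk adds one exact-filename LFS rule per image, and `Recall-confidence.png` is not among them. A rough way to see which of the uploaded files the visible rules cover is sketched below. It is an approximation under two stated assumptions: `fnmatch` is used as a stand-in for gitattributes pattern matching (the two are not identical), and only the patterns visible in this hunk are listed, so files matched by rules on lines 1 to 32 of `.gitattributes` (not shown in this diff) will be reported as untracked. The helper name `is_lfs_tracked` is hypothetical.

```python
from fnmatch import fnmatchcase

# Patterns copied from the .gitattributes hunk in this commit
# (three context lines plus the four added lines). Rules from
# lines 1-32 of the file are not visible here and are omitted.
VISIBLE_LFS_PATTERNS = [
    "*.zip",
    "*.zst",
    "*tfevents*",
    "confusion-matrix.png",
    "Precision-confidence.png",
    "Precision-recall.png",
    "result.png",
]


def is_lfs_tracked(filename: str, patterns=VISIBLE_LFS_PATTERNS) -> bool:
    """Return True if `filename` matches any of the given patterns.

    Approximation: fnmatch-style matching, not git's own matcher.
    """
    return any(fnmatchcase(filename, pattern) for pattern in patterns)


if __name__ == "__main__":
    for name in ["result.png", "Recall-confidence.png", "confusion-matrix.png"]:
        print(name, is_lfs_tracked(name))
```

With the visible rules alone, `Recall-confidence.png` is the one image that does not match, which is consistent with it being listed as `+0 -0` rather than as a three-line LFS pointer.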

Precision-confidence.png

ADDED

Binary file tracked with Git LFS.

Precision-recall.png

ADDED

Binary file tracked with Git LFS.

README.md

ADDED

|

@@ -0,0 +1,49 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

base_model:

|

| 4 |

+

- Ultralytics/YOLO11

|

| 5 |

+

pipeline_tag: object-detection

|

| 6 |

+

tags:

|

| 7 |

+

- pytorch

|

| 8 |

+

---

|

| 9 |

+

|

| 10 |

+

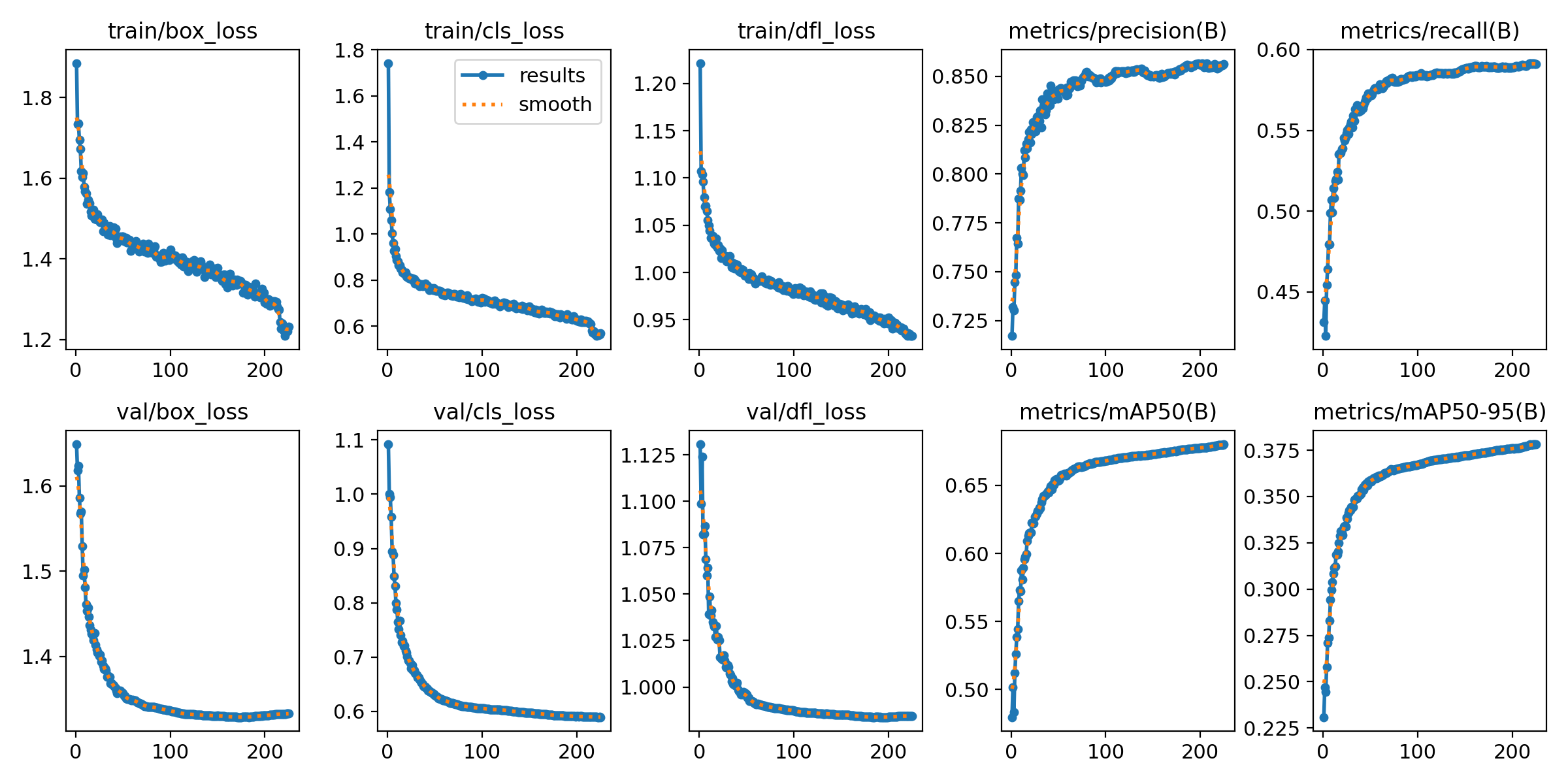

## YOLOv11n-Face-Detection

|

| 11 |

+

|

| 12 |

+

A lightweight face detection model based on YOLO architecture ([YOLOv11 nano](https://huggingface.co/Ultralytics/YOLO11)), trained for 225 epochs on the WIDERFACE dataset.

|

| 13 |

+

|

| 14 |

+

It achieves the following results on the evaluation set:

|

| 15 |

+

|

| 16 |

+

```

|

| 17 |

+

==================== Results ====================

|

| 18 |

+

Easy Val AP: 0.9420471677096086

|

| 19 |

+

Medium Val AP: 0.9210357271019756

|

| 20 |

+

Hard Val AP: 0.8099848364072022

|

| 21 |

+

=================================================

|

| 22 |

+

```

|

| 23 |

+

|

| 24 |

+

YOLO results:

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

|

| 28 |

+

[Confusion matrix](https://huggingface.co/AdamCodd/YOLOv11-face-detection/blob/main/confusion-matrix.png):

|

| 29 |

+

|

| 30 |

+

[[23577 2878]

|

| 31 |

+

|

| 32 |

+

[16098 0]]

|

| 33 |

+

|

| 34 |

+

### Usage

|

| 35 |

+

```python

|

| 36 |

+

from huggingface_hub import hf_hub_download

|

| 37 |

+

from ultralytics import YOLO

|

| 38 |

+

|

| 39 |

+

model_path = hf_hub_download(repo_id="AdamCodd/YOLOv11n-face-detection", filename="model.pt")

|

| 40 |

+

model = YOLO(model_path)

|

| 41 |

+

|

| 42 |

+

results = model.predict("/path/to/your/image", save=True) # saves the result in runs/detect/predict

|

| 43 |

+

```

|

| 44 |

+

|

| 45 |

+

### Limitations

|

| 46 |

+

|

| 47 |

+

- Performance may vary in extreme lighting conditions

|

| 48 |

+

- Best suited for frontal and slightly angled faces

|

| 49 |

+

- Optimal performance for faces occupying >20 pixels

|
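Review note: the README gives the confusion matrix as raw counts only. The sketch below derives precision, recall, and F1 from those counts. It rests on an assumption the README does not state: rows are predicted classes and columns are true classes, ordered (face, background), so the top-left cell is true positives, the top-right is false positives, and the bottom-left is false negatives. If the matrix is oriented the other way, precision and recall swap. The function name `detection_metrics` is hypothetical.

```python
# Counts copied from the confusion matrix in the README.
CONFUSION = [
    [23577, 2878],
    [16098, 0],
]


def detection_metrics(matrix):
    """Return (precision, recall, f1) for a 2x2 single-class matrix.

    ASSUMPTION: matrix[predicted][true] with class order (face, background).
    """
    tp = matrix[0][0]  # predicted face, actually face
    fp = matrix[0][1]  # predicted face, actually background
    fn = matrix[1][0]  # predicted background, actually face
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * tp / (2 * tp + fp + fn)
    return precision, recall, f1


if __name__ == "__main__":
    precision, recall, f1 = detection_metrics(CONFUSION)
    print(f"precision={precision:.4f} recall={recall:.4f} f1={f1:.4f}")
```

These figures describe a single operating point (whatever confidence and IoU thresholds produced the matrix), so they are not directly comparable to the Easy/Medium/Hard AP values, which integrate over all confidence thresholds.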

Recall-confidence.png

ADDED


config.json

ADDED

@@ -0,0 +1,47 @@
+{
+  "model": {
+    "type": "YOLO11n",
+    "pretrained": true,
+    "num_classes": 1,
+    "input_size": 640
+  },
+  "dataset": {
+    "name": "WIDER FACE",
+    "train": {
+      "images": "WIDER_train/images",
+      "annotations": "WIDER_train/annotations"
+    },
+    "val": {
+      "images": "WIDER_val/images",
+      "annotations": "WIDER_val/annotations"
+    }
+  },
+  "training": {
+    "epochs": 225,
+    "batch_size": 16,
+    "learning_rate": 0.001,
+    "optimizer": "Adam",
+    "momentum": 0.9,
+    "weight_decay": 0.0005,
+    "scheduler": {
+      "type": "StepLR",
+      "step_size": 30,
+      "gamma": 0.1
+    }
+  },
+  "augmentation": {
+    "horizontal_flip": true,
+    "vertical_flip": false,
+    "rotation": 10,
+    "scale": [0.8, 1.2],
+    "shear": 2
+  },
+  "evaluation": {
+    "interval": 1,
+    "metrics": ["mAP", "precision", "recall"]
+  },
+  "save": {
+    "checkpoint_dir": "checkpoints/",
+    "best_model": "model.pth"
+  }
+}
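Review note: the `training.scheduler` block implies a specific learning-rate trajectory over the 225 epochs. The sketch below works it out, assuming the block follows the usual step-decay rule (the learning rate is multiplied by `gamma` once every `step_size` epochs, as in PyTorch's `StepLR`). The commit does not show how `config.json` is consumed during training, so this illustrates what the values would mean rather than what actually ran. The function name `step_lr` is hypothetical.

```python
# Values copied from the "training" block of config.json.
BASE_LR = 0.001
STEP_SIZE = 30
GAMMA = 0.1
EPOCHS = 225


def step_lr(epoch: int, base_lr: float = BASE_LR,
            step_size: int = STEP_SIZE, gamma: float = GAMMA) -> float:
    """Learning rate at a zero-based epoch under step decay.

    ASSUMPTION: lr = base_lr * gamma ** (epoch // step_size).
    """
    return base_lr * gamma ** (epoch // step_size)


if __name__ == "__main__":
    # Print the rate at the start of each decay interval and at the last epoch.
    for epoch in list(range(0, EPOCHS, STEP_SIZE)) + [EPOCHS - 1]:
        print(f"epoch {epoch:3d}: lr = {step_lr(epoch):.1e}")
```

Under this rule the rate is cut tenfold seven times before epoch 225, so the final epochs would run at a rate seven orders of magnitude below the initial 0.001. That is worth confirming against the actual training logs before anyone reuses these settings.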

confusion-matrix.png

ADDED

Binary file tracked with Git LFS.

model.onnx

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:2dfe14171f5b76a05f9bcf0dac7f94b7bff4416b1f29eff7c9ef5830f51c5719
+size 10583429

model.pt

ADDED

@@ -0,0 +1,3 @@
+version https://git-lfs.github.com/spec/v1
+oid sha256:f5b3aaa834fd0acde177a0e84577458baa079d1ae5e9f500a9d03ca7d3a54c81
+size 5469715
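Review note: the diffs for `model.onnx` and `model.pt` are Git LFS pointer files, not the weights themselves. Each pointer records a SHA-256 digest and a byte size, which is enough to check a downloaded file for corruption. A minimal sketch follows, assuming the three-field pointer layout shown in this diff; the helper names `parse_lfs_pointer` and `matches_pointer` are hypothetical and not part of any Git LFS tooling.

```python
import hashlib

# Pointer text copied from the model.pt diff in this commit.
MODEL_PT_POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:f5b3aaa834fd0acde177a0e84577458baa079d1ae5e9f500a9d03ca7d3a54c81
size 5469715
"""


def parse_lfs_pointer(text: str) -> dict:
    """Split 'key value' lines into a dict with the oid broken into algo/digest."""
    fields = dict(line.split(" ", 1) for line in text.strip().splitlines())
    algo, digest = fields["oid"].split(":", 1)
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(path: str, pointer: dict) -> bool:
    """Hash the file at `path` in chunks and compare size and SHA-256 digest."""
    hasher = hashlib.sha256()
    size = 0
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(1 << 20), b""):
            hasher.update(chunk)
            size += len(chunk)
    return size == pointer["size"] and hasher.hexdigest() == pointer["digest"]


if __name__ == "__main__":
    pointer = parse_lfs_pointer(MODEL_PT_POINTER)
    print(pointer["algo"], pointer["size"])
```

After downloading `model.pt`, calling `matches_pointer(local_path, parse_lfs_pointer(MODEL_PT_POINTER))` should return `True` if the file is intact.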

result.png

ADDED

Binary file tracked with Git LFS.