Nemoretriever Graphic Element v1

Model Overview

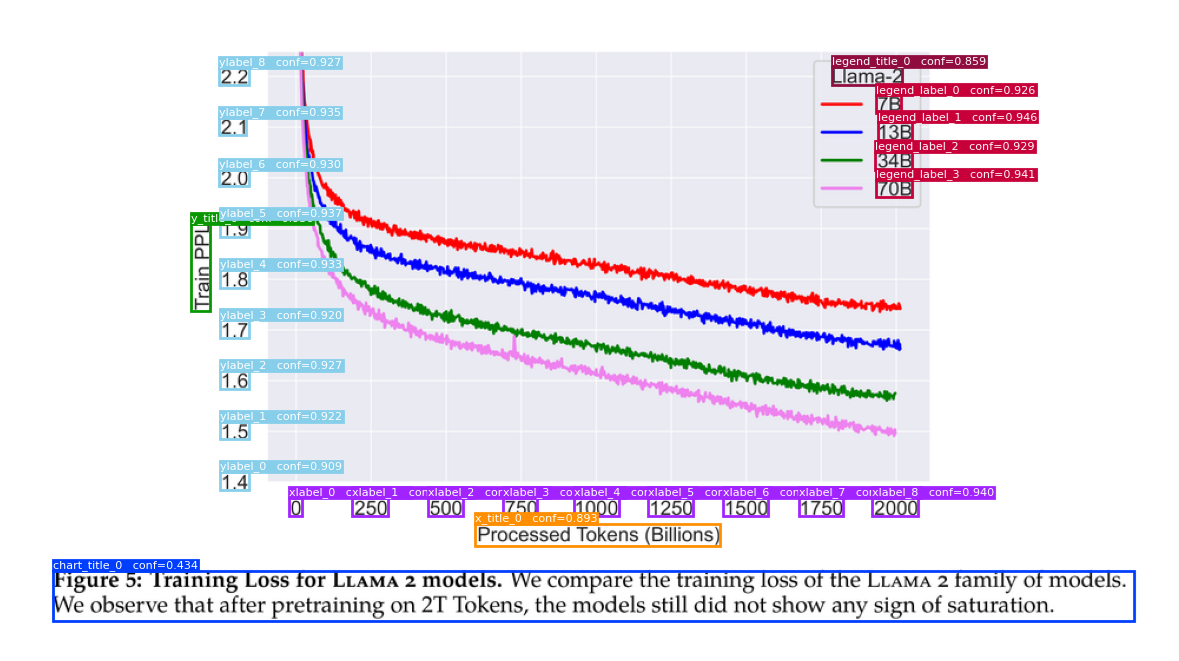

Preview of the model output on the example image.

This model expects a chart image as input. You can use the Nemoretriever Page Element v3 model to detect and crop such images from document pages.

Description

The NeMo Retriever Graphic Elements v1 model is a specialized object detection system designed to identify and extract key elements from charts and graphs. It is based on YOLOX, an anchor-free version of YOLO (You Only Look Once) that combines a simpler architecture with improved performance. While the underlying technology builds on work from Megvii Technology, we developed our own base model through full retraining rather than reusing pre-trained weights.

The model excels at detecting and localizing various graphic elements within chart images, including titles, axis labels, legends, and data point annotations. This capability makes it particularly valuable for document understanding tasks and automated data extraction from visual content.

This model is ready for commercial/non-commercial use.

We are excited to announce the open sourcing of this commercial model. For users interested in deploying this model in production environments, it is also available via the model API in NVIDIA Inference Microservices (NIM) at nemoretriever-graphic-elements-v1.

License/Terms of use

The use of this model is governed by the NVIDIA Open Model License Agreement, and the post-processing scripts are licensed under Apache 2.0.

Team

- Theo Viel

- Bo Liu

- Darragh Hanley

- Even Oldridge

Correspondence to Theo Viel ([email protected]) and Bo Liu ([email protected])

Deployment Geography

Global

Use Case

NeMo Retriever Graphic Elements v1 is designed to automate the extraction of graphic elements from charts in enterprise documents. Key applications include:

- Enterprise document extraction, embedding and indexing

- Augmenting Retrieval Augmented Generation (RAG) workflows with multimodal retrieval

- Data extraction from legacy documents and reports

Release Date

10/23/2025 via https://huggingface.co/nvidia/nemoretriever-graphic-elements-v1

References

- YOLOX paper: https://arxiv.org/abs/2107.08430

- YOLOX repo: https://github.com/Megvii-BaseDetection/YOLOX

- CACHED paper: https://arxiv.org/abs/2305.04151

- CACHED repo: https://github.com/pengyu965/ChartDete

- Technical blog: https://developer.nvidia.com/blog/approaches-to-pdf-data-extraction-for-information-retrieval/

Model Architecture

Architecture Type: YOLOX

Network Architecture: DarkNet53 backbone + FPN + decoupled head (one 1x1 convolution followed by two parallel 3x3 convolution branches, one for classification and one for bounding box prediction). YOLOX is a single-stage object detector that improves on YOLOv3.

This model was developed based on the YOLO architecture.

Number of model parameters: 5.4e7

Input

Input Type(s): Image

Input Format(s): Red, Green, Blue (RGB)

Input Parameters: Two-Dimensional (2D)

Other Properties Related to Input: Images are resized to (1024, 1024).
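The Explainability section describes the model as predicting over a grid. As a rough worked example for the 1024 x 1024 input, the sketch below counts the prediction locations, assuming the standard YOLOX output strides of 8, 16 and 32 (this card does not state the strides, so treat the numbers as illustrative). Because YOLOX is anchor-free, each grid cell yields one candidate box per feature level, and these candidates are what non-maximum suppression later filters.

```python
# Assumption: standard YOLOX FPN strides; not confirmed by this model card.
STRIDES = (8, 16, 32)
INPUT_SIZE = (1024, 1024)  # (height, width) after resizing


def grid_sizes(input_size, strides):
    """Return the (rows, cols) of the prediction grid at each stride."""
    h, w = input_size
    return [(h // s, w // s) for s in strides]


def num_predictions(input_size, strides):
    """Anchor-free head: one candidate box per grid cell per feature level."""
    return sum(rows * cols for rows, cols in grid_sizes(input_size, strides))


sizes = grid_sizes(INPUT_SIZE, STRIDES)
total = num_predictions(INPUT_SIZE, STRIDES)
print(sizes)  # [(128, 128), (64, 64), (32, 32)]
print(total)  # 21504
```

Most of these 21,504 candidates come from the finest (stride 8) grid, which suits small text elements such as axis labels.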

Output

Output Type(s): Array

Output Format: A dictionary of dictionaries containing np.ndarray objects. The outer dictionary has entries for each sample (page), and the inner dictionary contains a list of dictionaries, each with a bounding box (np.ndarray), class label, and confidence score for that page.

Output Parameters: One-Dimensional (1D)

Other Properties Related to Output: The output contains bounding boxes, detection confidence scores, and object classes (chart title, x/y axis titles and labels, legend title and labels, marker labels, value labels and other text). The thresholds used for non-maximum suppression (NMS) are conf_thresh=0.01 (minimum confidence for a detection to be kept) and iou_thresh=0.25 (overlap above which the lower-scoring of two boxes is suppressed).
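The card gives the NMS thresholds but not the exact procedure. The sketch below is a generic greedy NMS in NumPy that applies conf_thresh=0.01 and iou_thresh=0.25. The function names are hypothetical and not part of this repository's `utils` module, and suppressing boxes only within the same class (class-aware NMS) is an assumption about how the shipped post-processing behaves.

```python
import numpy as np

CONF_THRESH = 0.01  # value stated in this card
IOU_THRESH = 0.25   # value stated in this card


def box_iou(box, boxes):
    """IoU between one box and an array of boxes, all as (x_min, y_min, x_max, y_max)."""
    x1 = np.maximum(box[0], boxes[:, 0])
    y1 = np.maximum(box[1], boxes[:, 1])
    x2 = np.minimum(box[2], boxes[:, 2])
    y2 = np.minimum(box[3], boxes[:, 3])
    inter = np.clip(x2 - x1, 0, None) * np.clip(y2 - y1, 0, None)
    area = (box[2] - box[0]) * (box[3] - box[1])
    areas = (boxes[:, 2] - boxes[:, 0]) * (boxes[:, 3] - boxes[:, 1])
    return inter / (area + areas - inter + 1e-9)


def class_aware_nms(boxes, scores, labels, conf_thresh=CONF_THRESH, iou_thresh=IOU_THRESH):
    """Greedy NMS run separately within each class; returns kept indices by descending score."""
    candidates = np.flatnonzero(scores >= conf_thresh)  # drop low-confidence boxes first
    keep = []
    for cls in np.unique(labels[candidates]):
        idx = candidates[labels[candidates] == cls]
        idx = idx[np.argsort(-scores[idx])]  # highest score first
        while idx.size > 0:
            best = idx[0]
            keep.append(int(best))
            # Discard remaining boxes of this class that overlap the kept box too much.
            ious = box_iou(boxes[best], boxes[idx[1:]])
            idx = idx[1:][ious <= iou_thresh]
    return sorted(keep, key=lambda i: -scores[i])
```

With a low iou_thresh such as 0.25, even moderately overlapping boxes of the same class are merged, while overlapping boxes of different classes (for example a legend label inside a legend region) are both kept under this class-aware assumption.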

Output Classes:

- Chart title (`chart_title`): title or caption associated with the chart
- x-axis title (`x_title`): title associated with the x axis
- y-axis title (`y_title`): title associated with the y axis
- x-axis label(s) (`xlabel`): labels associated with the x axis
- y-axis label(s) (`ylabel`): labels associated with the y axis
- Legend title (`legend_title`): title of the legend
- Legend label(s) (`legend_label`): labels associated with the legend
- Marker label(s) (`mark_label`): labels associated with markers
- Value label(s) (`value_label`): labels associated with values
- Other (`other`): miscellaneous other text components

Our AI models are designed and/or optimized to run on NVIDIA GPU-accelerated systems. By leveraging NVIDIA’s hardware (e.g., GPU cores) and software frameworks (e.g., CUDA libraries), the model achieves faster training and inference times compared to CPU-only solutions.

Usage

The model requires torch and the custom code available in this repository.

- Clone the repository. First, make sure git-lfs is installed (https://git-lfs.com):

```shell
git lfs install
```

- Clone using HTTPS:

```shell
git clone https://huggingface.co/nvidia/nemoretriever-graphic-elements-v1
```

- Or using SSH:

```shell
git clone [email protected]:nvidia/nemoretriever-graphic-elements-v1
```

- Run the model using the following code:

```python
import torch
import numpy as np
import matplotlib.pyplot as plt
from PIL import Image

from model import define_model
from utils import plot_sample, postprocess_preds_graphic_element, reformat_for_plotting

# Load image
path = "./example.png"
img = Image.open(path).convert("RGB")
img = np.array(img)

# Load model
model = define_model("graphic_element_v1")

# Inference
with torch.inference_mode():
    x = model.preprocess(img)
    preds = model(x, img.shape)[0]

print(preds)

# Post-processing
boxes, labels, scores = postprocess_preds_graphic_element(preds, model.threshold, model.labels)

# Plot
boxes_plot, confs = reformat_for_plotting(boxes, labels, scores, img.shape, model.num_classes)

plt.figure(figsize=(15, 10))
plot_sample(img, boxes_plot, confs, labels=model.labels)
plt.show()
```

Note that this repository only provides minimal code to run inference with the model. If you wish to do additional training, refer to the original repo.

- Advanced post-processing

Additional post-processing might be required to use the model as part of a data extraction pipeline.

We provide examples in the notebook Demo.ipynb.
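The Explainability section states that post-processed boxes are normalized (x_min, y_min, x_max, y_max). As one example of such additional post-processing, the sketch below converts normalized boxes to pixel coordinates and crops each detection, for instance to pass the crops to an OCR engine. It assumes x is normalized by image width and y by image height; `to_pixel_boxes`, `crop_detections` and the `min_score` cutoff are hypothetical and are not taken from Demo.ipynb.

```python
import numpy as np


def to_pixel_boxes(boxes, img_shape):
    """Convert normalized (x_min, y_min, x_max, y_max) boxes to integer pixel coordinates.

    Assumes x is normalized by image width and y by image height.
    """
    h, w = img_shape[:2]
    scale = np.array([w, h, w, h], dtype=float)
    pixel = np.round(np.asarray(boxes, dtype=float) * scale).astype(int)
    # Clip to the image so crops never index outside the array.
    pixel[:, [0, 2]] = np.clip(pixel[:, [0, 2]], 0, w)
    pixel[:, [1, 3]] = np.clip(pixel[:, [1, 3]], 0, h)
    return pixel


def crop_detections(img, boxes, labels, scores, min_score=0.5):
    """Return (label, score, crop) tuples for detections at or above a hypothetical score cutoff."""
    crops = []
    for (x0, y0, x1, y1), label, score in zip(to_pixel_boxes(boxes, img.shape), labels, scores):
        if score >= min_score and x1 > x0 and y1 > y0:  # skip weak or empty boxes
            crops.append((label, float(score), img[y0:y1, x0:x1]))
    return crops
```

Assuming `boxes` from the usage example is in this normalized format, `crop_detections(img, boxes, labels, scores)` would return one image crop per retained detection.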

Model Version(s):

nemoretriever-graphic-elements-v1

Training and Evaluation Datasets:

Training Dataset

Data Modality: Image

Image Training Data Size: Less than a Million Images

Data collection method by dataset: Automated

Labeling method by dataset: Hybrid: Automated, Human

Pretraining (by NVIDIA): 118,287 images of the COCO train2017 dataset

Finetuning (by NVIDIA): 5,614 images from the PubMed Central (PMC) Chart Dataset; 9,091 images from the DeepRule Dataset, with annotations obtained using the CACHED model.

Number of images and bounding boxes per class:

| Label | Images | Boxes |
|---|---|---|
| chart_title | 9,487 | 18,754 |
| x_title | 5,995 | 9,152 |
| y_title | 8,487 | 12,893 |
| xlabel | 13,227 | 217,820 |
| ylabel | 12,983 | 172,431 |
| legend_title | 168 | 209 |
| legend_label | 9,812 | 59,044 |
| mark_label | 660 | 2,887 |
| value_label | 3,573 | 65,847 |
| other | 3,717 | 29,565 |
| Total | 14,143 | 588,602 |

Evaluation Dataset

Results were evaluated using the PMC Chart dataset. The Mean Average Precision (mAP) was used as the evaluation metric to measure the model's ability to correctly identify and localize objects across different confidence thresholds.
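The card does not specify how AP is computed (for example, which IoU thresholds or interpolation scheme were used; COCO-style evaluation averages over IoU thresholds from 0.5 to 0.95). The sketch below illustrates the idea for a single class under stated assumptions: one IoU threshold of 0.5 and all-point interpolation. Predictions are visited from most to least confident, which is equivalent to sweeping the confidence threshold, and precision is integrated over recall.

```python
import numpy as np


def iou(a, b):
    """IoU of two (x_min, y_min, x_max, y_max) boxes."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = (a[2] - a[0]) * (a[3] - a[1]) + (b[2] - b[0]) * (b[3] - b[1]) - inter
    return inter / union if union > 0 else 0.0


def average_precision(preds, gts, iou_thresh=0.5):
    """All-point interpolated AP for one class (illustrative, assumed settings).

    preds: list of (image_id, score, box); gts: dict image_id -> list of boxes.
    """
    n_gt = sum(len(b) for b in gts.values())
    matched = {k: [False] * len(v) for k, v in gts.items()}
    tp = []
    # Visiting predictions by descending score sweeps the confidence threshold.
    for image_id, _, box in sorted(preds, key=lambda p: -p[1]):
        ious = [iou(box, g) for g in gts.get(image_id, [])]
        j = int(np.argmax(ious)) if ious else -1
        if j >= 0 and ious[j] >= iou_thresh and not matched[image_id][j]:
            matched[image_id][j] = True
            tp.append(1)
        else:
            tp.append(0)  # duplicate or poorly localized detection
    cum_tp = np.cumsum(np.array(tp, dtype=float))
    recall = cum_tp / max(n_gt, 1)
    precision = cum_tp / np.arange(1, len(tp) + 1)
    # Make precision non-increasing in recall, then sum the area under the curve.
    r = np.concatenate(([0.0], recall, [1.0]))
    p = np.concatenate(([0.0], precision, [0.0]))
    p = np.maximum.accumulate(p[::-1])[::-1]
    steps = np.flatnonzero(r[1:] != r[:-1])
    return float(np.sum((r[steps + 1] - r[steps]) * p[steps + 1]))
```

Under these assumptions, mAP would be the mean of this per-class AP over the ten output classes; the per-class AP and AR values reported below are the card's own results, not outputs of this sketch.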

Number of bounding boxes and images per class:

| Label | Images | Boxes |
|---|---|---|
| chart_title | 38 | 38 |
| x_title | 404 | 437 |
| y_title | 502 | 505 |
| xlabel | 553 | 4,091 |
| ylabel | 534 | 3,944 |
| legend_title | 17 | 19 |
| legend_label | 318 | 1,077 |
| mark_label | 42 | 219 |
| value_label | 52 | 726 |
| other | 113 | 464 |
| Total | 560 | 11,520 |

Data collection method by dataset: Hybrid: Automated, Human

Labeling method by dataset: Hybrid: Automated, Human

Properties: The validation dataset is the same as the PMC Chart dataset.

Per-class Performance Metrics:

| Class | AP (%) | AR (%) |
|---|---|---|
| chart_title | 82.38 | 93.16 |
| x_title | 88.77 | 92.31 |
| y_title | 89.48 | 92.32 |
| xlabel | 85.04 | 88.93 |
| ylabel | 86.22 | 89.40 |
| other | 55.14 | 79.48 |
| legend_label | 84.09 | 88.07 |
| legend_title | 60.61 | 68.42 |
| mark_label | 49.31 | 73.61 |
| value_label | 62.66 | 68.32 |

Ethical Considerations

NVIDIA believes Trustworthy AI is a shared responsibility and we have established policies and practices to enable development for a wide array of AI applications. When downloaded or used in accordance with our terms of service, developers should work with their internal model team to ensure this model meets requirements for the relevant industry and use case and addresses unforeseen product misuse.

For more detailed information on ethical considerations for this model, please see the Explainability, Bias, Safety & Security, and Privacy sections below.

Please report security vulnerabilities or NVIDIA AI Concerns here.

Bias

| Field | Response |
|---|---|
| Participation considerations from adversely impacted groups protected classes in model design and testing | None |
| Measures taken to mitigate against unwanted bias | None |

Explainability

| Field | Response |
|---|---|
| Intended Application & Domain: | Object Detection |
| Model Type: | YOLOX-architecture for detection of graphic elements within images of charts. |
| Intended User: | Enterprise developers, data scientists, and other technical users who need to extract textual elements from charts and graphs. |
| Output: | After post-processing, the output is three numpy arrays containing the detections: boxes [N x 4] (normalized (x_min, y_min, x_max, y_max) format), labels [N] (associated classes), and scores [N] (confidence scores). |
| Describe how the model works: | Finds and identifies objects in images by first dividing the image into a grid. For each section of the grid, the model uses a series of neural networks to extract visual features and simultaneously predict what objects are present (in this case "chart title" or "axis label" etc.) and exactly where they are located in that section, all in a single pass through the image. |
| Name the adversely impacted groups this has been tested to deliver comparable outcomes regardless of: | Not Applicable |
| Technical Limitations & Mitigation: | The model may not generalize to unknown chart types or formats. Further fine-tuning might be required for such images. |
| Verified to have met prescribed NVIDIA quality standards: | Yes |
| Performance Metrics: | Mean Average Precision, detection recall and visual inspection |
| Potential Known Risks: | This model may not always detect all elements in a document. |
| Licensing & Terms of Use: | Use of this model is governed by the NVIDIA Open Model License Agreement and the Apache 2.0 License. |

Privacy

| Field | Response |
|---|---|
| Generatable or reverse engineerable personal data? | No |
| Personal data used to create this model? | No |
| Was consent obtained for any personal data used? | Not Applicable |
| How often is the dataset reviewed? | Before Release |
| Is there provenance for all datasets used in training? | Yes |
| Does data labeling (annotation, metadata) comply with privacy laws? | Yes |
| Is data compliant with data subject requests for data correction or removal, if such a request was made? | No, not possible with externally-sourced data. |
| Applicable Privacy Policy | https://www.nvidia.com/en-us/about-nvidia/privacy-policy/ |

Safety

| Field | Response |
|---|---|
| Model Application Field(s): | Object Detection for Retrieval, focused on Enterprise |
| Describe the life critical impact (if present). | Not Applicable |
| Use Case Restrictions: | Abide by NVIDIA Open Model License Agreement. |
| Model and dataset restrictions: | The Principle of least privilege (PoLP) is applied, limiting access for dataset generation and model development. Restrictions enforce dataset access during training, and dataset license constraints are adhered to. |